Collaborative Lifecycle Management performance report: Rational Quality Manager 6.0 release

Authors: Marco Antonio Ferreira de Araujo, Alfredo Bittencourt, Vaughn Rokosz
Last updated: Jan 18, 2016
Build basis: Rational Quality Manager 6.0

Introduction

This article examines the performance of Rational Quality Manager on small, virtual systems. It should not be used for sizing; please refer to the CLM Sizing Strategy for sizing guidance.

The test methodology involves:

- Collecting standard 20-minute performance test data at user stages ranging from 100 to 1,000 concurrent users, in increments of 100 users.

- Executing all use cases in a single test suite to determine the concurrent-user limit for this standard workload.

- Executing each use case individually to determine the concurrent-user limit for each scenario.

- Using the same standard hardware and installation topology throughout the tests, in order to evaluate the workload generated by each use case and how the use cases interact from a performance perspective. Further details about the configuration parameters can be found in Appendix A.

- Detailed results are shown only up to the limiting user stage. Data collected after the server is overloaded is not reliable, because the server becomes unresponsive.

Disclaimer

The information in this document is distributed AS IS. The use of this information or the implementation of any of these techniques is a customer responsibility and depends on the customer's ability to evaluate and integrate them into the customer's operational environment. While each item may have been reviewed by IBM for accuracy in a specific situation, there is no guarantee that the same or similar results will be obtained elsewhere. Customers attempting to adapt these techniques to their own environments do so at their own risk. Any pointers in this publication to external Web sites are provided for convenience only and do not in any manner serve as an endorsement of these Web sites. Any performance data contained in this document was determined in a controlled environment, and therefore, the results that may be obtained in other operating environments may vary significantly. Users of this document should verify the applicable data for their specific environment. Performance is based on measurements and projections using standard IBM benchmarks in a controlled environment. The actual throughput or performance that any user will experience will vary depending upon many factors, including considerations such as the amount of multi-programming in the user's job stream, the I/O configuration, the storage configuration, and the workload processed. Therefore, no assurance can be given that an individual user will achieve results similar to those stated here. This testing was done as a way to compare and characterize the differences in performance between different versions of the product. The results shown here should thus be looked at as a comparison of the contrasting performance between different versions, and not as an absolute benchmark of performance.

Methodology

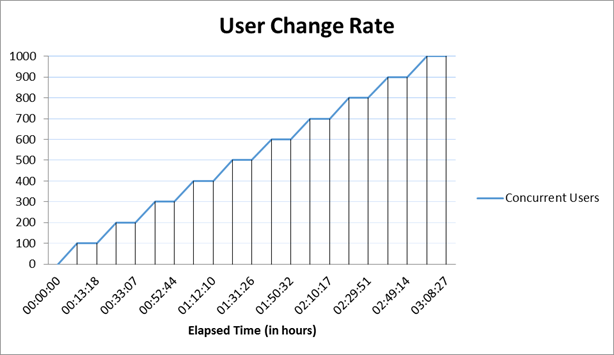

Rational Performance Tester (RPT) was used to simulate the workload generated through the web client. Each user completed a random use case from a set of available use cases. A Rational Performance Tester script was created for each use case. The scripts are organized by pages, and each page represents a user action. The workload is role based: the use cases of each role are separated into individual user groups within an RPT schedule. A new user starts its actions every 5 seconds until the number of users for that stage is reached. A five-minute settle time follows, to keep the workload generated by the user ramp-up from interfering with the stage results. After the settle time, the user stage runs for 20 minutes, during which each user performs a use case at least twice, at random intervals. When the stage is complete, RPT starts adding new users to move to the next stage. The limiting stage is the last stage at which the servers deliver an acceptable average response time, defined for this test as 2 seconds. The following graph illustrates the user load over time during the test execution:
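The ramp-up, settle, and measurement phases described above can be turned into a rough stage timeline. This is a minimal sketch, assuming (the report does not state this) that every stage adds exactly 100 users one at a time at a fixed 5-second interval and that the settle and measurement periods apply to every stage; the constant and function names are hypothetical.

```python
# Rough timeline of the RPT schedule described above.
# Assumptions (not stated in the report): every stage adds 100 users,
# one user every 5 seconds, followed by the 5-minute settle time and the
# 20-minute measured period.

RAMP_INTERVAL_S = 5        # a new user starts every 5 seconds
SETTLE_S = 5 * 60          # settle time after each ramp-up
MEASURE_S = 20 * 60        # measured period for each user stage
USERS_PER_STAGE = 100      # stage increment: 100, 200, ..., 1,000 users


def stage_duration_s(users_added: int = USERS_PER_STAGE) -> int:
    """Length of one stage in seconds: ramp-up + settle + measurement."""
    return users_added * RAMP_INTERVAL_S + SETTLE_S + MEASURE_S


def schedule(max_users: int = 1000) -> list[tuple[int, int, int]]:
    """Return (users, measure_start_s, measure_end_s) for every stage."""
    stages = []
    t = 0
    for users in range(USERS_PER_STAGE, max_users + 1, USERS_PER_STAGE):
        t += USERS_PER_STAGE * RAMP_INTERVAL_S + SETTLE_S  # ramp-up, then settle
        stages.append((users, t, t + MEASURE_S))
        t += MEASURE_S                                      # measured period
    return stages


if __name__ == "__main__":
    for users, start, end in schedule():
        print(f"{users:>5} users: measured from minute {start / 60:.1f} to {end / 60:.1f}")
    print(f"Total length: {schedule()[-1][2] / 3600:.2f} h")
```

Under these assumptions each stage takes 2,000 seconds (500 s ramp-up, 300 s settle, 1,200 s measured), so the ten stages run for roughly 5.6 hours.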

User roles

| Use role | % of Total | Related Actions |
|---|---|---|
| QE Manager | 8 | Test plan create, Browse test plan and test case, Browse test script, Simple test plan copy, Defect search, View dashboard |
| Test Lead | 19 | Edit Test Environments, Edit test plan, Create test case, Bulk edit of test cases, Full text search, Browse test script, Test Execution, Defect search |
| Tester | 68 | Defect create, Defect modify, Defect search, Edit test case, Create test script, Edit test script, Test Execution, Browse test execution record |
| Dashboard Viewer | 5 | View dashboard (with login and logout) |
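The RPT schedule splits each user stage into role-based user groups. The sketch below illustrates that split under stated assumptions: the percentages in the table are applied to the stage's user count, and fractional users are resolved with largest-remainder rounding. The report does not say how RPT rounds group sizes, and the function name is hypothetical.

```python
# Percentage of all users assigned to each role (User roles table).
ROLE_SHARES = {
    "QE Manager": 8,
    "Test Lead": 19,
    "Tester": 68,
    "Dashboard Viewer": 5,
}


def users_per_role(total_users: int, shares: dict[str, int] = ROLE_SHARES) -> dict[str, int]:
    """Allocate total_users across roles in proportion to their percentage shares."""
    weight_sum = sum(shares.values())
    exact = {role: total_users * pct / weight_sum for role, pct in shares.items()}
    alloc = {role: int(value) for role, value in exact.items()}
    # Assumption: users lost to truncation go to the roles with the largest remainders.
    leftover = total_users - sum(alloc.values())
    by_remainder = sorted(exact, key=lambda r: exact[r] - alloc[r], reverse=True)
    for role in by_remainder[:leftover]:
        alloc[role] += 1
    return alloc


if __name__ == "__main__":
    print(users_per_role(600))
```

For the 600-user stage, `users_per_role(600)` gives 48 QE Managers, 114 Test Leads, 408 Testers, and 30 Dashboard Viewers; the remainder handling only matters for stage sizes where the percentages do not divide evenly.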

Page performance

Page performance is measured as the mean (average) response time of each page in the result data. For the majority of the pages under test, there is little variation between runs, and the mean values are close to the median of the sample for the load.

Topology

Figure 1 illustrates the topology under test, which is based on Standard Topology (E1) Enterprise - Distributed / Linux / DB2. The specifications of the machines under test are listed in the table below. Server tuning details are listed in Appendix A.


| Function | Number of Machines | Machine Type | CPU / Machine | # of CPU | Memory/Machine | Disk | Disk capacity | Network interface | OS and Version |
|---|---|---|---|---|---|---|---|---|---|
| Proxy Server (IBM HTTP Server and WebSphere Plugin) | 1 | IBM System x3550 M4 | 4 x Intel Xeon | 4 | 4GB | RAID 1 SAS Disk x 2 | 299GB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.5 |
| JTS Server | 1 | IBM System x3550 M4 | 4 x Intel Xeon | 4 | 8GB | RAID 5 SAS Disk x 2 | 897GB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.5 |
| QM Server | 1 | IBM System x3550 M4 | 4 x Intel Xeon E5-2640 2.5GHz | 4 | 8GB | RAID 5 SAS Disk x 2 | 897GB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.5 |
| Database Server | 1 | IBM System x3650 M4 | 4 x Intel Xeon E5-2640 2.5GHz | 4 | 8GB | RAID 5 SAS Disk x 2 | 2.4TB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.1 |
| RPT workbench | 1 | IBM System x3550 M4 | 4 x Intel Xeon E5-2640 2.5GHz | 4 | 8GB | RAID 5 SAS Disk x 2 | 30GB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.4 |
| RPT Agents | 3 | IBM System x3550 M4 | 4 x Intel Xeon E5-2640 2.5GHz | 4 | 8GB | N/A | 30GB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.5 |
| Network switches | N/A | Cisco 2960G-24TC-L | N/A | N/A | N/A | N/A | N/A | Gigabit Ethernet | 24 Ethernet 10/100/1000 ports |

Network connectivity

All server machines and test clients are located on the same subnet. The LAN has a maximum bandwidth of 1000 Mbps and a ping latency of less than 0.3 ms.

Data volume and shape

The artifacts were contained in one large project, for a total of 579,142 artifacts. The repository contained the following data:

- 50 test plans

- 30,000 test scripts

- 30,000 test cases

- 120,000 test case execution records

- 360,000 test case results

- 3,000 test suites

- 5,000 work items (defects)

- 200 test environments

- 600 test phases

- 30 build definitions

- 6,262 execution sequences

- 3,000 test suite execution records

- 15,000 test suite execution results

- 6,000 build records

- Database size = 15 GB

- QM index size = 1.3 GB
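The per-type counts in the list above add up to the stated total of 579,142 artifacts; the database and index sizes are storage figures rather than artifacts, so they are left out. A quick consistency check:

```python
# Artifact counts from the data volume list above (database and index sizes excluded).
ARTIFACT_COUNTS = {
    "test plans": 50,
    "test scripts": 30_000,
    "test cases": 30_000,
    "test case execution records": 120_000,
    "test case results": 360_000,
    "test suites": 3_000,
    "work items (defects)": 5_000,
    "test environments": 200,
    "test phases": 600,
    "build definitions": 30,
    "execution sequences": 6_262,
    "test suite execution records": 3_000,
    "test suite execution results": 15_000,
    "build records": 6_000,
}

total = sum(ARTIFACT_COUNTS.values())
print(f"Total artifacts: {total:,}")  # Total artifacts: 579,142
```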

Full Workload Performance Result

Workload characterization

Test Cases

| Use Role | Percentage of the user role | Sequence of Operations |
|---|---|---|
| QE Manager | 1 | Test plan create: user creates a test plan, then adds a description, business objectives, test objectives, 2 test schedules, a test estimate, quality objectives, and entry and exit criteria. |
| | 26 | Browse test plans and test cases: user browses assets by opening View Test Plans, then configures View Builder for a name search; opens the test plan found, reviews various sections, then closes it. User searches for a test case by name, opens the test case found, reviews various sections, then closes it. |
| | 26 | Browse test script: user searches for a test script by name, opens it, reviews it, then closes it. |
| | 1 | Simple test plan copy: user searches for a test plan by name, selects one, then makes a copy. |
| | 23 | Defect search: user searches for a specific defect by number, reviews the defect (pause), then closes it. |
| | 20 | View dashboard: user views the dashboard. |
| Test Lead | 8 | Edit test environment: user lists all test environments, then selects one of the environments and modifies it. |
| | 15 | Edit test plan: user lists all test plans; from the query result, opens a test plan for editing, adds a test case to the test plan, edits a few other sections of the test plan, and then saves the test plan. |
| | 4 | Create test case: user creates a test case by opening the Create Test Case page, entering data for a new test case, and then saving the test case. |
| | 1 | Bulk edit of test cases: user searches for test cases with a root name and changes the owner of all the test cases found. |
| | 3 | Full text search: user does a full text search of all assets in the repository using a root name, then opens one of the items found. |
| | 32 | Browse test script: user searches for a test script by name, opens it, reviews it, then closes it. |
| | 26 | Test execution: user selects View Test Execution Records by name, starts execution, enters a pass/fail verdict, reviews results, sets points, then saves. |
| | 11 | Defect search: user searches for a specific defect by number, reviews the defect (pause), then closes it. |
| Tester | 8 | Defect create: user creates a defect by opening the Create Defect page, entering data for a new defect, and then saving the defect. |
| | 5 | Defect modify: user searches for a specific defect by number, modifies it, then saves it. |
| | 14 | Defect search: user searches for a specific defect by number, reviews the defect (pause), then closes it. |
| | 6 | Edit test case: user searches for a test case by name and opens it in the editor, then adds a test script to the test case (the user clicks Next a few times, exercising the server-side paging feature, before selecting the test script). The test case is then saved. |
| | 4 | Create test script: user creates a test script by selecting the Create Test Script page, entering data for a new test script, and then saving the test script. |
| | 8 | Edit test script: user selects a test script by name; the test script is then opened for editing, modified, and saved. |
| | 42 | Test execution: user selects View Test Execution Records by name, starts execution, enters a pass/fail verdict, reviews results, sets points, then saves. |
| | 7 | Browse test execution record: user browses TERs by name, then selects a TER and opens the most recent results. |
| Dashboard Viewer | 100 | View dashboard (with login and logout): user logs in, views the dashboard, then logs out. This user adds some login/logout behavior to the workload. |
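Within each role, a user picks its next use case according to the percentages above. The sketch below models that pick with Python's `random.choices`; it is an illustration, not RPT's actual selection logic. Note that the Tester and QE Manager percentages sum to 94 and 97 rather than 100, so the sketch normalizes the weights (which `random.choices` does implicitly); whether RPT normalizes the same way is an assumption. `overall_share` is a hypothetical helper that multiplies a role's share by a use case's normalized weight, under the simplifying assumption that every user draws use cases at the same rate.

```python
import random

# Role shares (% of all users) from the User roles table.
ROLE_SHARES = {"QE Manager": 8, "Test Lead": 19, "Tester": 68, "Dashboard Viewer": 5}

# Use-case weights (% within each role) from the Test Cases table.
USE_CASE_WEIGHTS = {
    "QE Manager": {
        "Test plan create": 1, "Browse test plans and test cases": 26,
        "Browse test script": 26, "Simple test plan copy": 1,
        "Defect search": 23, "View dashboard": 20,
    },
    "Test Lead": {
        "Edit test environment": 8, "Edit test plan": 15, "Create test case": 4,
        "Bulk edit of test cases": 1, "Full text search": 3,
        "Browse test script": 32, "Test execution": 26, "Defect search": 11,
    },
    "Tester": {
        "Defect create": 8, "Defect modify": 5, "Defect search": 14,
        "Edit test case": 6, "Create test script": 4, "Edit test script": 8,
        "Test execution": 42, "Browse test execution record": 7,
    },
    "Dashboard Viewer": {"View dashboard (with login and logout)": 100},
}


def pick_use_case(role: str, rng: random.Random) -> str:
    """Draw one use case for a user of the given role, weighted by the table."""
    weights = USE_CASE_WEIGHTS[role]
    # random.choices normalizes the weights, so they need not sum to 100.
    return rng.choices(list(weights), weights=list(weights.values()), k=1)[0]


def overall_share(role: str, use_case: str) -> float:
    """Expected share of all use-case draws for one role/use-case pair,
    assuming every user draws use cases at the same rate (a simplification)."""
    weights = USE_CASE_WEIGHTS[role]
    within_role = weights[use_case] / sum(weights.values())
    return ROLE_SHARES[role] / 100 * within_role


if __name__ == "__main__":
    rng = random.Random(42)
    print([pick_use_case("Tester", rng) for _ in range(5)])
    print(f"Tester test execution: {overall_share('Tester', 'Test execution'):.1%}")
```

Under these assumptions, Tester test execution alone accounts for about 30% of all use-case draws (0.68 x 42/94).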

Average page response time

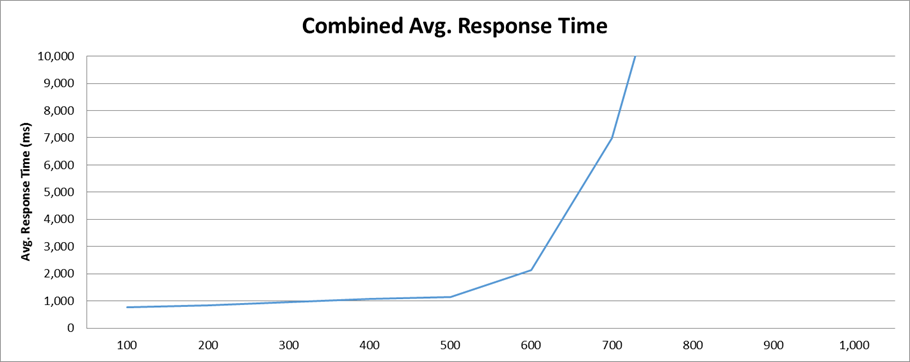

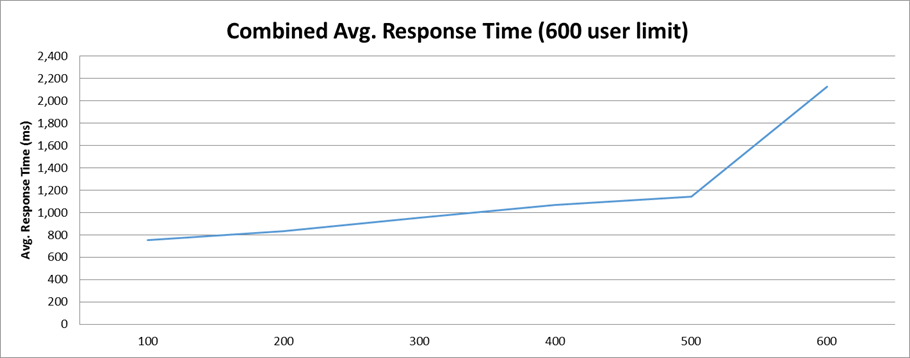

This load test simulated the full workload with up to 1,000 concurrent users. The following graph shows the average page response time (in milliseconds) for user stages from 100 to 1,000 concurrent users, in increments of 100 users. At the 600-user stage, the average response time starts to increase rapidly, indicating that the server has reached its limit. The combined work order graph shows the average response time calculated across all the steps in this execution. At the 600-user stage, its maximum capacity, this test requested an average of 81.56 elements/second and 10.96 pages/second. The average response time for all pages was 853.26 ms, jumping to 2,656.64 ms when the server was overloaded at the 700-user stage.
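The limiting-stage rule from the methodology (the last stage whose average response time stays within 2 seconds) can be written as a small function. This is a hypothetical sketch, not an RPT feature: it scans stages in ascending order and stops at the first stage over the threshold, since data beyond the overload point is treated as unreliable. Only the 600- and 700-user averages quoted above are used; the values for other stages appear only in the graphs.

```python
from typing import Optional

ACCEPTABLE_AVG_RESPONSE_MS = 2000.0  # 2-second threshold used in this report


def limiting_stage(avg_response_ms: dict[int, float],
                   limit_ms: float = ACCEPTABLE_AVG_RESPONSE_MS) -> Optional[int]:
    """Return the last user stage, scanning in ascending order, whose average
    page response time stays within limit_ms. Scanning stops at the first
    stage that exceeds the limit, because later stages are not reliable."""
    last_ok = None
    for users in sorted(avg_response_ms):
        if avg_response_ms[users] > limit_ms:
            break
        last_ok = users
    return last_ok


# Only the two stage averages quoted in the text are used here.
full_workload = {600: 853.26, 700: 2656.64}
print(limiting_stage(full_workload))  # 600
print(f"Slowdown from 600 to 700 users: {full_workload[700] / full_workload[600]:.2f}x")
```

The second line shows that the average response time roughly triples between the 600- and 700-user stages.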


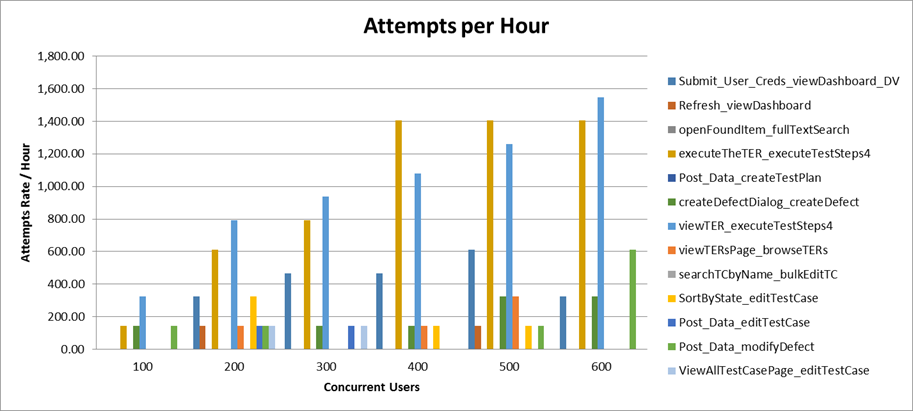

Performance result breakdown

The following graph shows the average step response time, in milliseconds, for each user stage. Each step is part of a test case listed in the Test Cases table; lower numbers are better.

The average number of operations per hour during each user stage, for each use case.

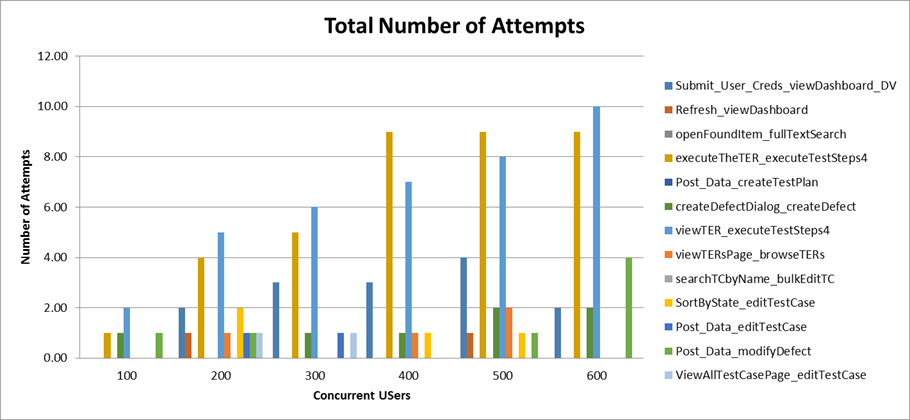

The total number of iterations performed by users during the test, for each use case.


Detailed resource utilization

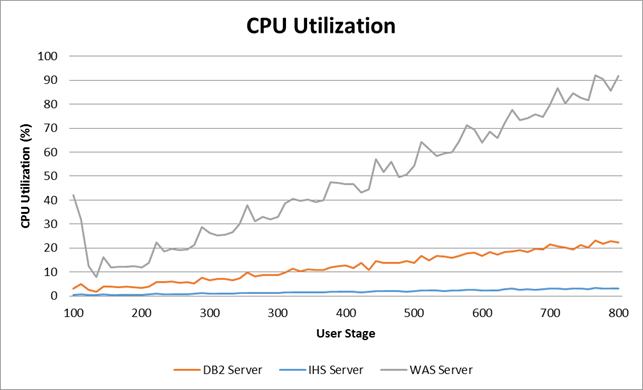

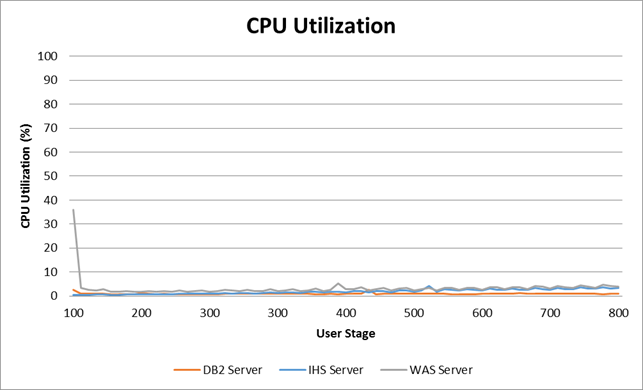

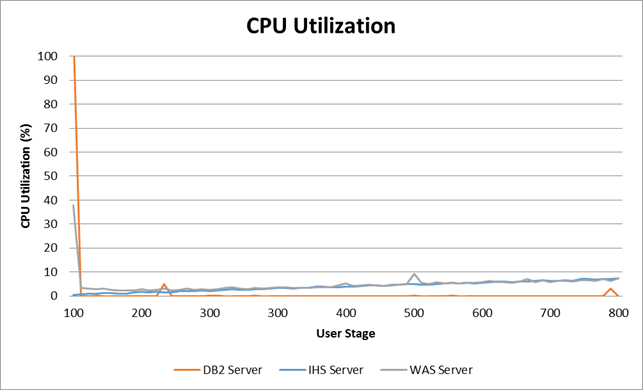

The limiting resource was the Application Server, which reached a maximum CPU utilization of 71.26%, with an average of 63.67% at the limiting (600-user) stage. The Database Server averaged 16.68% CPU utilization, and the HTTP Server only 2.35%.
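One simple way to read the CPU figures above is to rank the servers by average utilization at the limiting stage. The sketch below does that; it is a heuristic offered as an assumption, not the report's stated method, since CPU is only one resource and the memory, disk, network, and garbage collection data also bear on the conclusion.

```python
# Average CPU utilization (%) at the limiting stage, from the text above.
AVG_CPU_PERCENT = {
    "Application Server": 63.67,
    "Database Server": 16.68,
    "HTTP Server": 2.35,
}


def rank_by_cpu(cpu: dict[str, float]) -> list[tuple[str, float, float]]:
    """Return (server, avg_cpu_pct, idle_pct) tuples, busiest server first."""
    ranked = sorted(cpu.items(), key=lambda item: item[1], reverse=True)
    return [(name, pct, round(100.0 - pct, 2)) for name, pct in ranked]


for name, pct, idle in rank_by_cpu(AVG_CPU_PERCENT):
    print(f"{name:<20} {pct:6.2f}% busy, {idle:6.2f}% idle")
```

The ranking agrees with the report in placing the Application Server first, but the idle figure also shows it was not CPU-saturated on average, which is why the other resource graphs matter.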

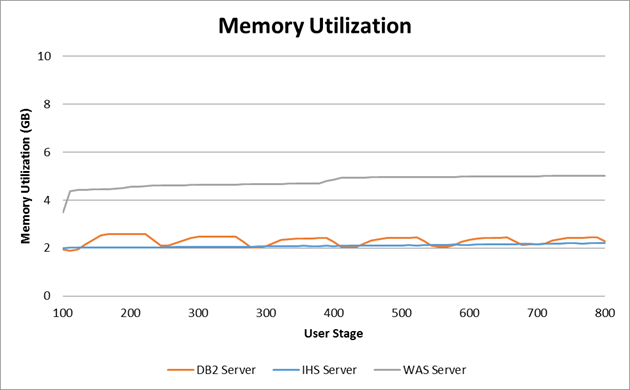

The following graph shows the average memory utilization for each server until the limiting stage.

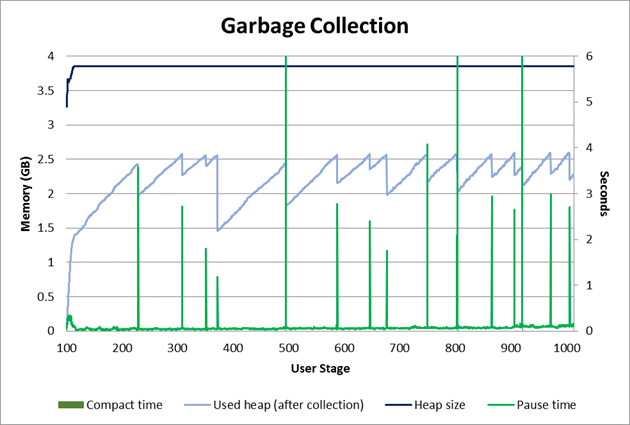

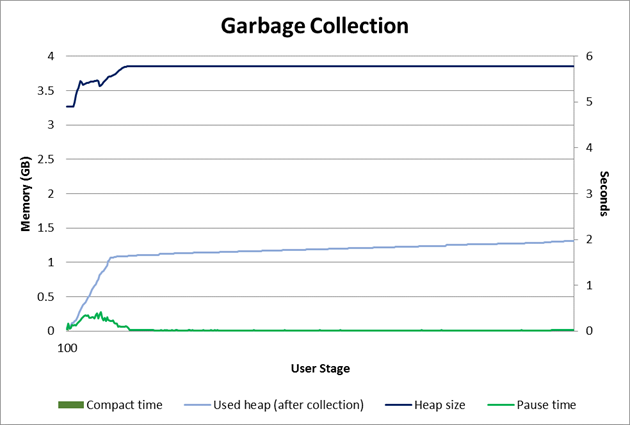

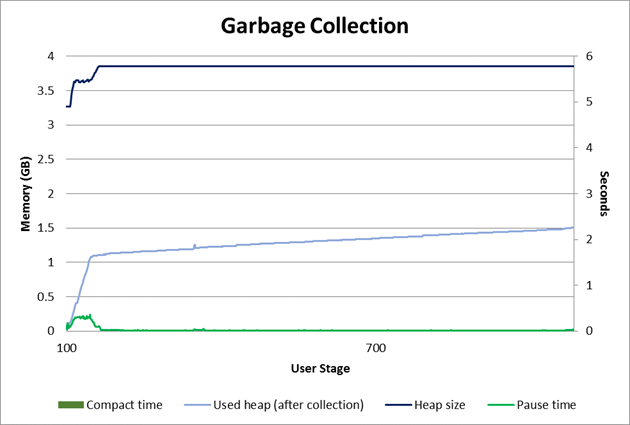

The next graph shows the garbage collector behavior during the test. Detailed garbage collector configuration can be found in Appendix A.


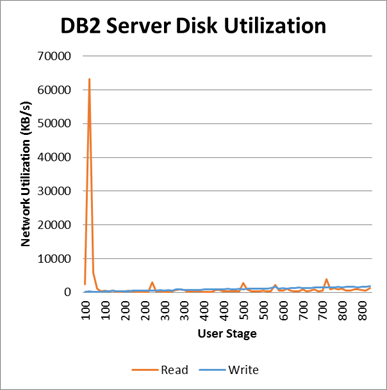

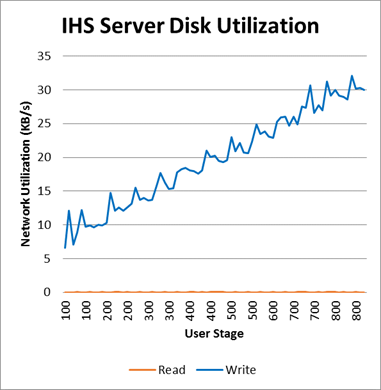

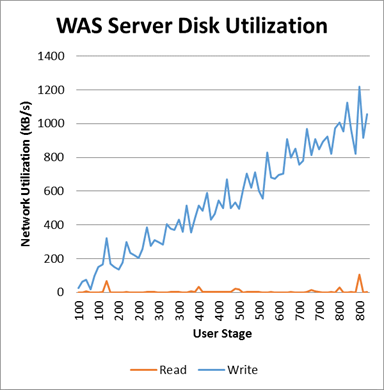

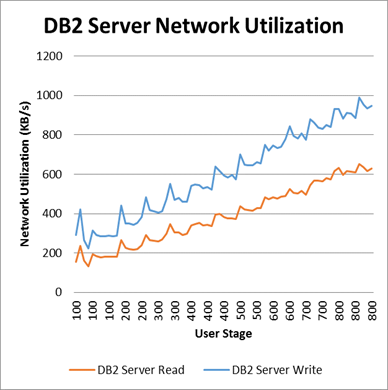

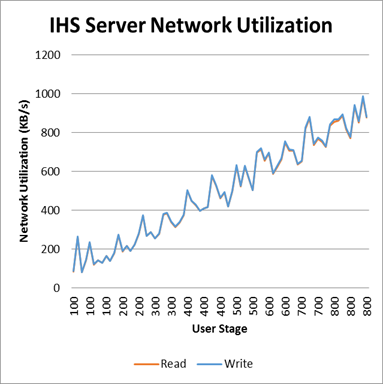

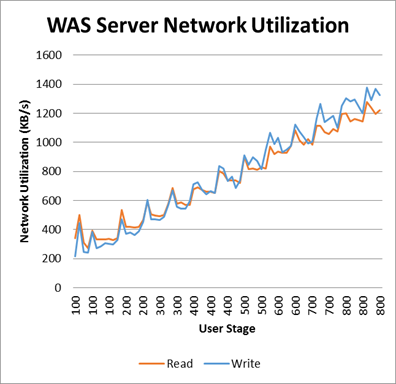

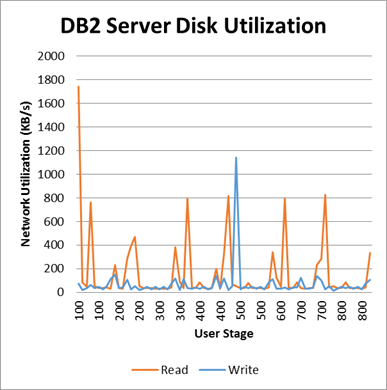

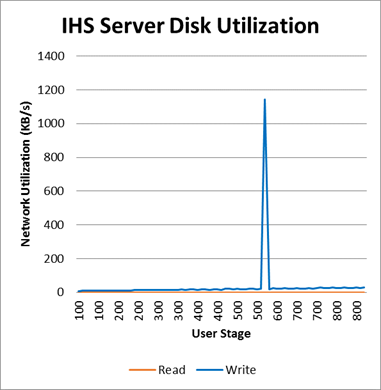

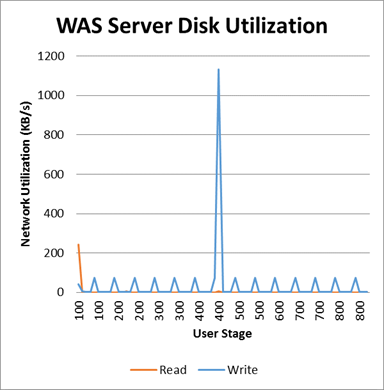

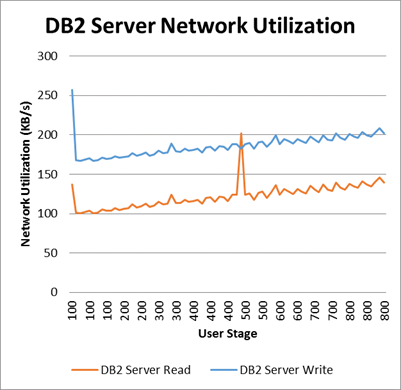

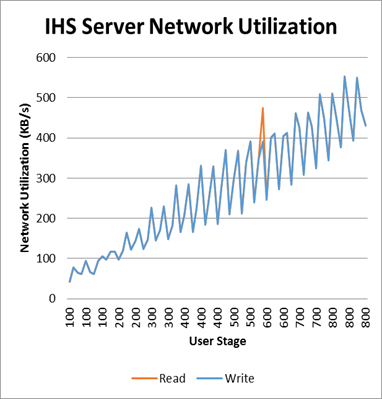

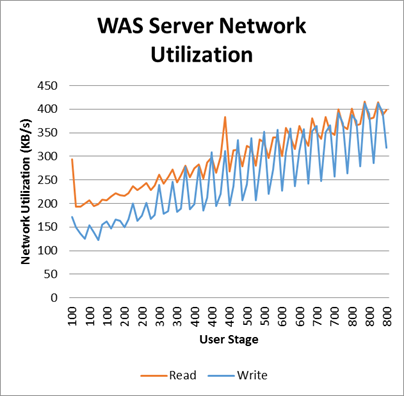

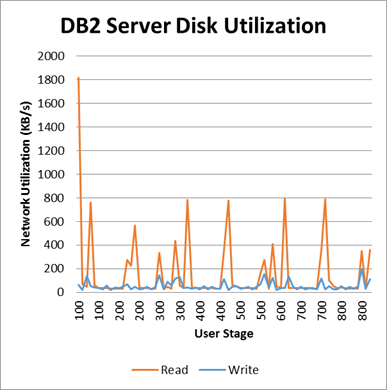

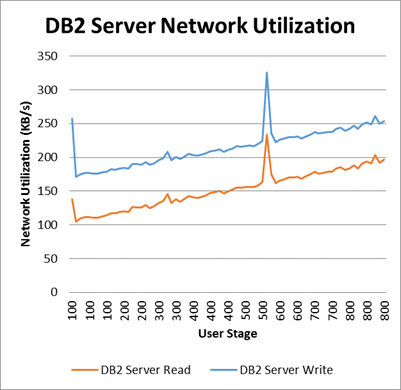

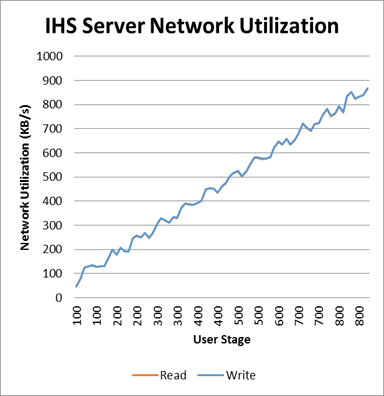

Additional information regarding disk and network utilization for each server during the test can be found in the following graphs.


Browse TPs TCs Performance Result

Workload characterization

Test Cases

| Use role | % of Total | Related Actions |
|---|---|---|
| QE Manager | 100 | Browse test plans and test cases |

Average page response time

This load test simulated up to 1,000 concurrent users. The following graph shows the average page response time (in milliseconds) for user stages from 100 to 1,000 concurrent users, in increments of 100 users. The server never reached its limit during the test. The combined work order graph shows the average response time calculated across all the steps in this execution. At the 1,000-user stage, this test requested an average of 102.31 elements/second and 1.11 pages/second, and the average response time for all pages was 103.02 ms.

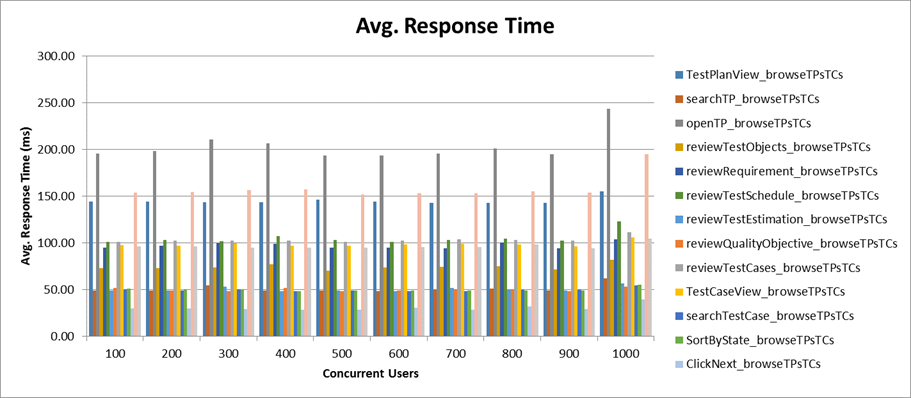

Performance result breakdown

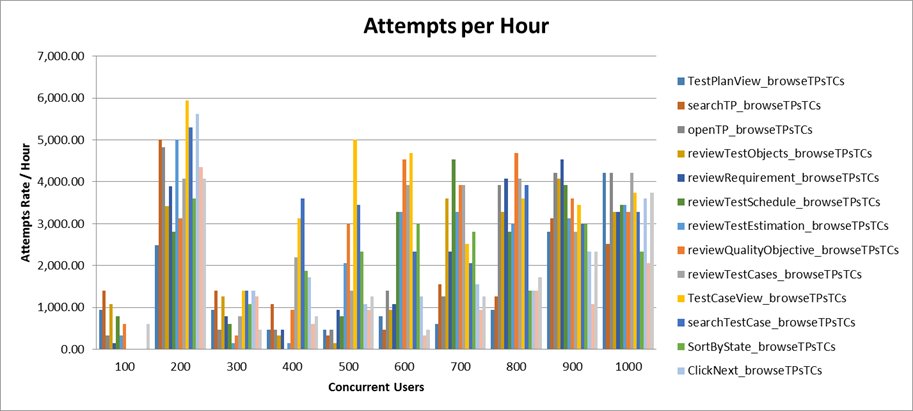

The following graph shows the average step response time, in milliseconds, for each user stage. Each step is part of a test case listed in the Test Cases table; lower numbers are better.

The average number of operations per hour during each user stage, for each use case.

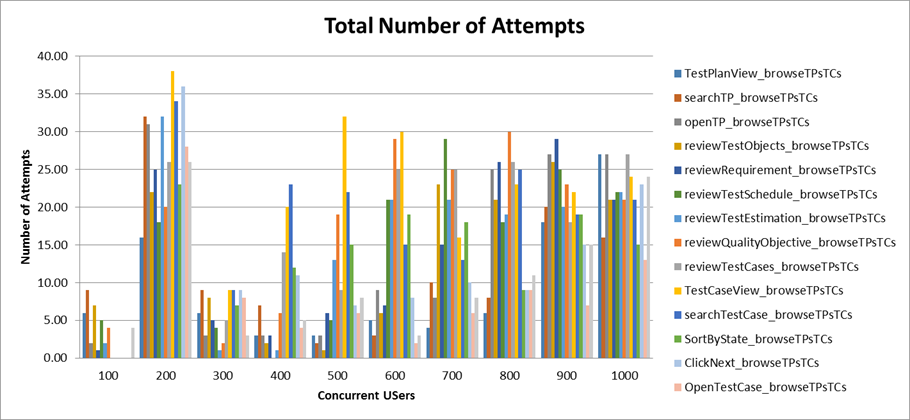

The total number of iterations performed by users during the test, for each use case.


Detailed resource utilization

The Database Server reached a maximum CPU utilization of 2.68%, with an average of 1.24% at the 1,000-user stage. The Application Server averaged 3.03% CPU utilization, and the HTTP Server 2.00%.

The following graph shows the average memory utilization for each server up to the 1,000-user stage; no limiting stage was reached in this test.

The next graph shows the garbage collector behavior during the test. Detailed garbage collector configuration can be found in Appendix A.


Additional information regarding disk and network utilization for each server during the test can be found in the following graphs.


Browse Test Execution (4 Steps) Performance Result

Workload characterization

Test Cases

| Use role | % of Total | Related Actions |
|---|---|---|
| Tester | 100 | Test execution (4 steps) |

Average page response time

This load test simulated up to 1,000 concurrent users. The following graph shows the average page response time (in milliseconds) for user stages from 100 to 1,000 concurrent users, in increments of 100 users. The server never reached its limit during the test. The combined work order graph shows the average response time calculated across all the steps in this execution. At the 1,000-user stage, this test requested an average of 252.5 elements/second and 2.08 pages/second, and the average response time for all pages was 128.29 ms.
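The element and page rates quoted for the three tests can be combined into elements per page, a rough indicator of how many requests each page generates (in RPT, page elements are the individual HTTP requests that make up a page). A small calculation using only the rates stated in this report:

```python
# (elements/second, pages/second) as reported for each test.
RATES = {
    "Full workload, 600 users": (81.56, 10.96),
    "Browse TPs TCs, 1,000 users": (102.31, 1.11),
    "Browse test execution (4 steps), 1,000 users": (252.5, 2.08),
}


def elements_per_page(elements_per_s: float, pages_per_s: float) -> float:
    """Average number of page elements requested per page."""
    return elements_per_s / pages_per_s


for test, (elements, pages) in RATES.items():
    print(f"{test:<46} {elements_per_page(elements, pages):6.1f} elements/page")
```

This gives roughly 7.4 elements per page for the full workload, compared with about 92 and 121 for the two single-scenario browse tests.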

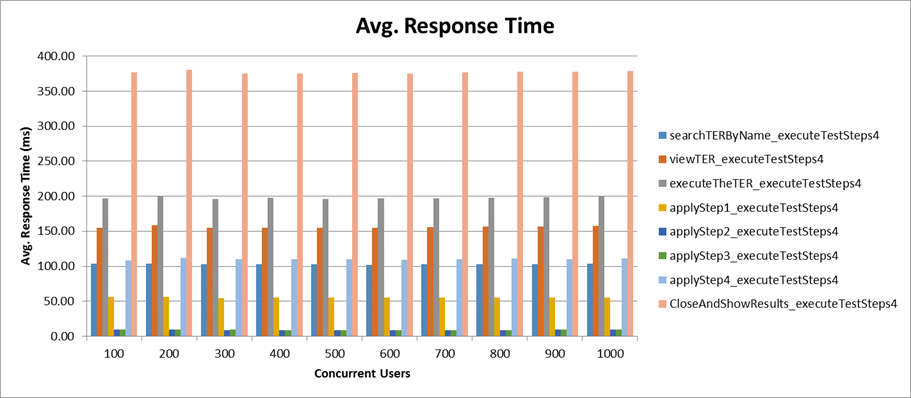

Performance result breakdown

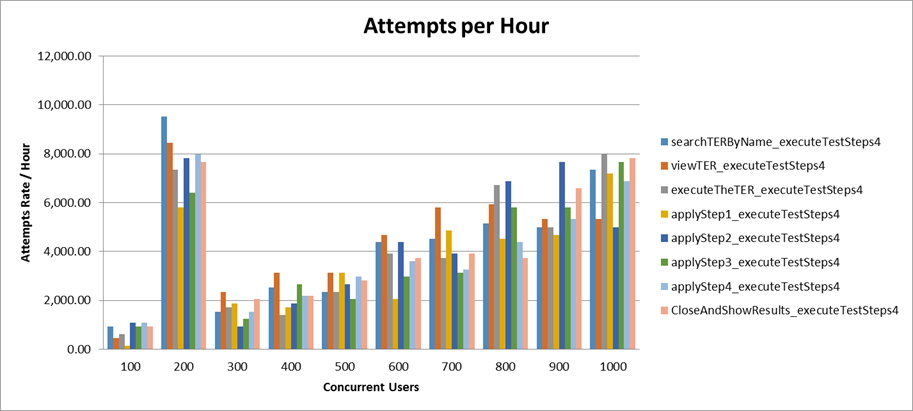

The following graph shows the average step response time, in milliseconds, for each user stage. Each step is part of a test case listed in the Test Cases table; lower numbers are better.

The average number of operations per hour during each user stage, for each use case.

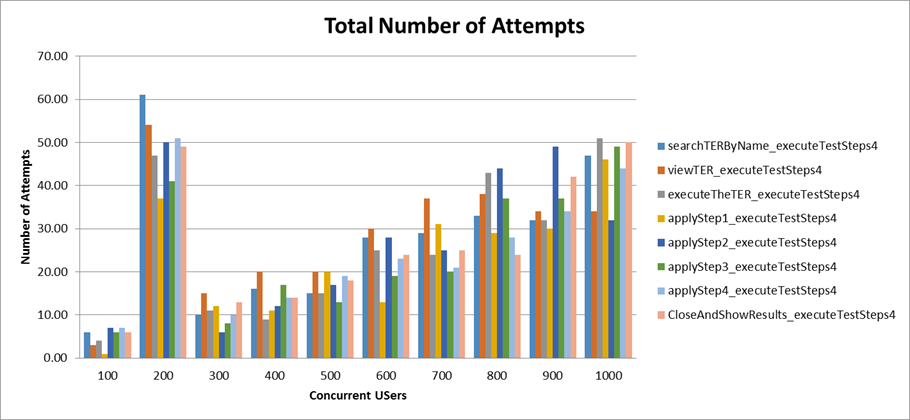

The total number of iterations performed by users during the test, for each use case.


Detailed resource utilization

The Database Server reached 0.9% maximum CPU utilization, with an average of 0.93% at the 1,000-user stage. The Application Server averaged 4.42% CPU utilization, and the HTTP Server 4.26%.

The following graph shows the average memory utilization for each server up to the 1,000-user stage; no limiting stage was reached in this test.

The next graph shows the garbage collector behavior during the test. Detailed garbage collector configuration can be found in Appendix A.


Additional information regarding disk and network utilization for each server during the test can be found in the following graphs.


Appendix A - Key configuration parameters

| Product | Version | Highlights for configurations under test |
|---|---|---|
| IBM HTTP Server for WebSphere Application Server | 8.5.5.1 | IBM HTTP Server functions as a reverse proxy server implemented via the Web server plug-in for WebSphere Application Server. Configuration details can be found in the CLM infocenter. HTTP server (httpd.conf): OS Configuration: |
| IBM WebSphere Application Server Network Deployment | 8.5.5.1 | JVM settings: `-Xgcpolicy:gencon -Xmx4g -Xms4g -Xmn1500m -Xcompressedrefs -Xgc:preferredHeapBase=0x100000000 -Xverbosegclog:gcJVM.log -XX:MaxDirectMemorySize=1g` Thread pools: LTPA Authentication: OS Configuration: System wide resources for the app server process owner: |
| DB2 | ESE 10.5.3 | DB2 Connection pool size (set on JTS): DBM CFG: |
| LDAP server | N/A | |
| License server | N/A | |
| RPT workbench | 8.3.0.3 | Defaults |
| RPT agents | 8.3.0.3 | Defaults |
| Network | | Shared subnet within test lab |
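With `-Xgcpolicy:gencon`, the IBM JVM heap is divided into a nursery (whose size `-Xmn` sets) and a tenure area, and setting `-Xms` equal to `-Xmx` fixes the total heap size. The sketch below parses the JVM settings listed above and derives that split. The parser and its names are hypothetical; it only understands `-Xmx`, `-Xms`, and `-Xmn` with k/m/g suffixes and ignores every other option.

```python
import re
from dataclasses import dataclass

JVM_ARGS = (
    "-Xgcpolicy:gencon -Xmx4g -Xms4g -Xmn1500m -Xcompressedrefs "
    "-Xgc:preferredHeapBase=0x100000000 -Xverbosegclog:gcJVM.log "
    "-XX:MaxDirectMemorySize=1g"
)

# Multipliers that convert a size suffix into megabytes.
_UNIT_TO_MB = {"k": 1 / 1024, "m": 1, "g": 1024}


@dataclass
class HeapLayout:
    heap_mb: float      # total heap (-Xmx)
    nursery_mb: float   # nursery (-Xmn)
    tenure_mb: float    # remainder of the heap
    fixed_size: bool    # True when -Xms equals -Xmx


def size_mb(value: str) -> float:
    """Convert a JVM size such as '4g' or '1500m' to megabytes."""
    match = re.fullmatch(r"(\d+)([kmg])", value.lower())
    if match is None:
        raise ValueError(f"unsupported size: {value!r}")
    return int(match.group(1)) * _UNIT_TO_MB[match.group(2)]


def heap_layout(jvm_args: str) -> HeapLayout:
    """Derive heap, nursery, and tenure sizes (MB) from -Xmx, -Xms, and -Xmn."""
    sizes = {}
    for arg in jvm_args.split():
        for flag in ("-Xmx", "-Xms", "-Xmn"):
            if arg.startswith(flag):
                sizes[flag] = size_mb(arg[len(flag):])
    heap, nursery = sizes["-Xmx"], sizes["-Xmn"]
    return HeapLayout(heap, nursery, heap - nursery, sizes.get("-Xms") == heap)


if __name__ == "__main__":
    print(heap_layout(JVM_ARGS))
```

For the settings above, this works out to a fixed 4,096 MB heap with a 1,500 MB nursery (about 37% of the heap) and roughly 2,596 MB of tenure space.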

About the authors:

- Marco Antonio Ferreira de Araujo is a performance specialist for CLM, Maximo, and TRIRIGA product families.

- Alfredo Bittencourt is a performance specialist for CLM, Maximo, and TRIRIGA product families.

- Vaughn Rokosz is the performance lead for the CLM product family.

Related topics: Collaborative Lifecycle Management performance report: Rational Quality Manager 5.0 release, Performance datasheets

Questions and comments:

- What other performance information would you like to see here?

- Do you have performance scenarios to share?

- Do you have scenarios that are not addressed in documentation?

- Where are you having problems in performance?

