Introduction

This report compares the performance of Rational Quality Manager (RQM) version 6.0 to the previous 5.0 release. The goal of the test was to verify that the performance of the 6.0 operations was the same as or better than that of their 5.0.2 equivalents.

The test methodology involves these steps:

- Collect the standard one-hour performance test data using 1,000 concurrent users from RQM 5.0 (repeat 3 times)

- Repeat the same standard one-hour performance test load against RQM 6.0 using 1,000 concurrent users (repeat 3 times)

- Compare the 6 runs
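The comparison step can be sketched as follows. This is a minimal illustration, not the tooling used for the report: the page name and response times are made-up placeholders, and `compare_releases` is a hypothetical helper that averages the three runs of each release and reports the difference.

```python
from statistics import mean

# Hypothetical per-run mean response times (ms) for one page, three runs per release.
# These numbers are placeholders, not measurements from this report.
runs_50 = {"Open Test Plan": [1200.0, 1180.0, 1220.0]}
runs_60 = {"Open Test Plan": [950.0, 970.0, 960.0]}


def compare_releases(baseline, candidate):
    """Return {page: (baseline_mean, candidate_mean, delta_ms)}.

    A negative delta means the candidate release is faster.
    """
    result = {}
    for page in baseline.keys() & candidate.keys():
        b = mean(baseline[page])
        c = mean(candidate[page])
        result[page] = (b, c, c - b)
    return result


comparison = compare_releases(runs_50, runs_60)
```

Averaging across the three runs before comparing keeps a single noisy run from dominating the result.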

The 6.0 tests do not include any of the new 6.0 features (such as the configuration management capabilities), which allows the performance workload and data repositories to be the same for 5.0.2 and 6.0.

Disclaimer

The information in this document is distributed AS IS. The use of this information or the implementation of any of these techniques is a customer responsibility and depends on the customer's ability to evaluate and integrate them into the customer's operational environment. While each item may have been reviewed by IBM for accuracy in a specific situation, there is no guarantee that the same or similar results will be obtained elsewhere. Customers attempting to adapt these techniques to their own environments do so at their own risk. Any pointers in this publication to external Web sites are provided for convenience only and do not in any manner serve as an endorsement of these Web sites. Any performance data contained in this document was determined in a controlled environment, and therefore, the results that may be obtained in other operating environments may vary significantly. Users of this document should verify the applicable data for their specific environment.

Performance is based on measurements and projections using standard IBM benchmarks in a controlled environment. The actual throughput or performance that any user will experience will vary depending upon many factors, including considerations such as the amount of multi-programming in the user's job stream, the I/O configuration, the storage configuration, and the workload processed. Therefore, no assurance can be given that an individual user will achieve results similar to those stated here.

This testing was done as a way to compare and characterize the differences in performance between different versions of the product. The results shown here should thus be looked at as a comparison of the contrasting performance between different versions, and not as an absolute benchmark of performance.

Summary of performance results

Most operations in 6.0 are equivalent to or better than their 5.0 equivalents. The chart below is a high-level summary, showing a histogram of the absolute time differences (in milliseconds) between the 6.0 and 5.0 operations. We consider an operation "equivalent" if its response time difference between the two releases is within 100 ms. Out of the 97 operations we measured, all but one are equivalent or better, and 20 show significant improvement.
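The 100 ms equivalence rule can be expressed as a small classifier. This is a sketch under two assumptions that the report does not state: the boundary is inclusive (a difference of exactly 100 ms counts as equivalent), and anything faster by more than the threshold counts as an improvement. The function name `classify` and the sample values are illustrative only.

```python
THRESHOLD_MS = 100  # from the report: differences within 100 ms are "equivalent"


def classify(ms_50, ms_60, threshold=THRESHOLD_MS):
    """Classify a 6.0 response time against its 5.0 counterpart.

    Assumption: the threshold is inclusive, and any change beyond it is
    labeled "improved" or "degraded" depending on its sign.
    """
    delta = ms_60 - ms_50
    if abs(delta) <= threshold:
        return "equivalent"
    return "improved" if delta < 0 else "degraded"


# Hypothetical response times in milliseconds (5.0 value, 6.0 value).
labels = [classify(500, 450), classify(1200, 960), classify(800, 1000)]
```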

There are improvements in dashboard loading, test plan loading, and editing of various artifacts.

The one operation which has degraded is still relatively fast (around 1 second total):

There is a defect tracking this issue, and it is under consideration to be addressed in future releases.

For more details on the 1,000-user workload, see the section User roles, test cases and workload characterization.

For the detailed performance results for each use case, see the section Detailed performance results.

Summary of OS resource utilization

Comparing system resource data for the 6.0 and 5.0 releases, 6.0 has:

- Slightly higher CPU utilization on the application server

- Lower disk and CPU utilization on the DB server

- Higher system memory consumption on both the DB and application servers

- Similar network I/O

The details are provided in the section Resource utilization.

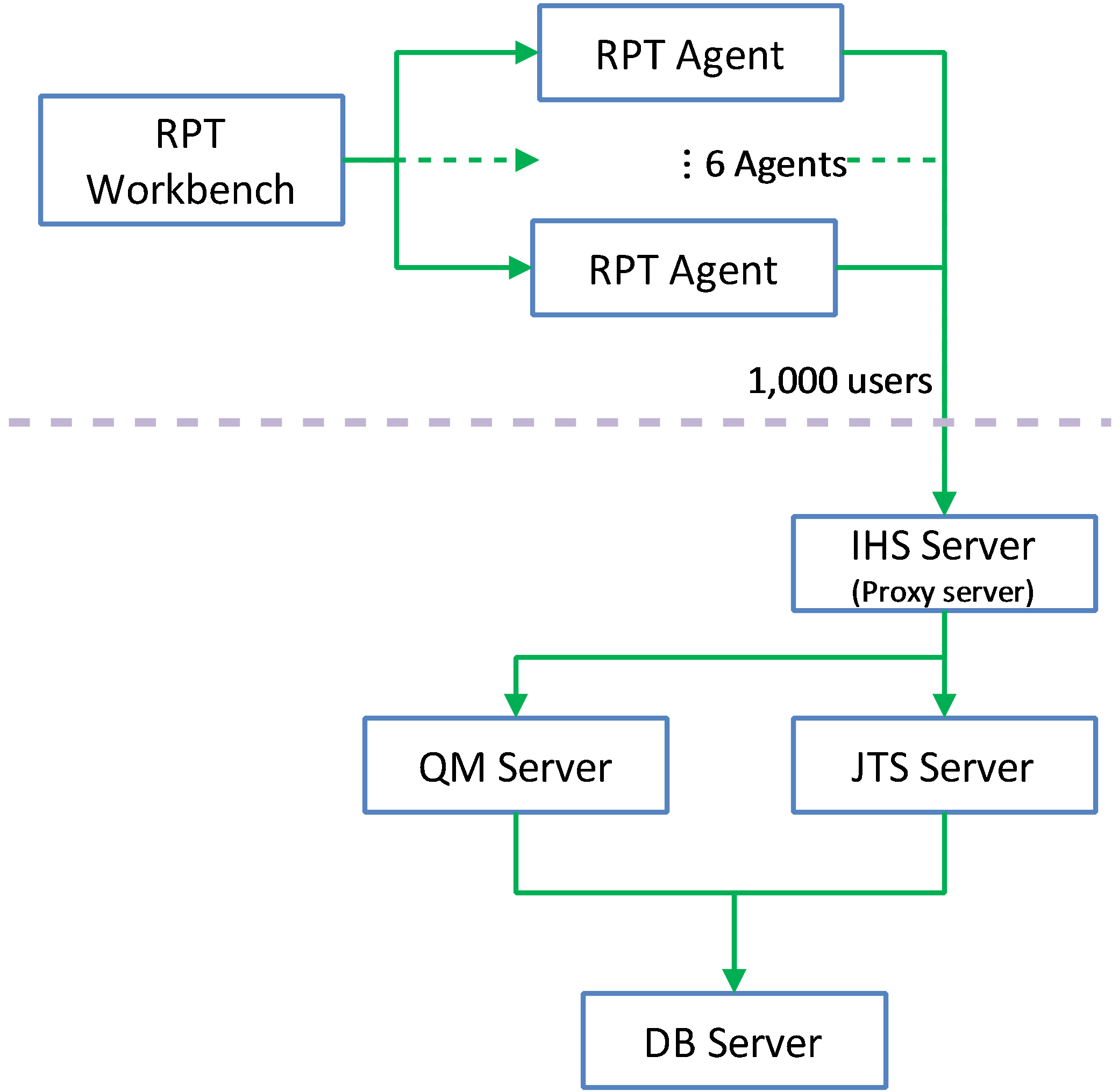

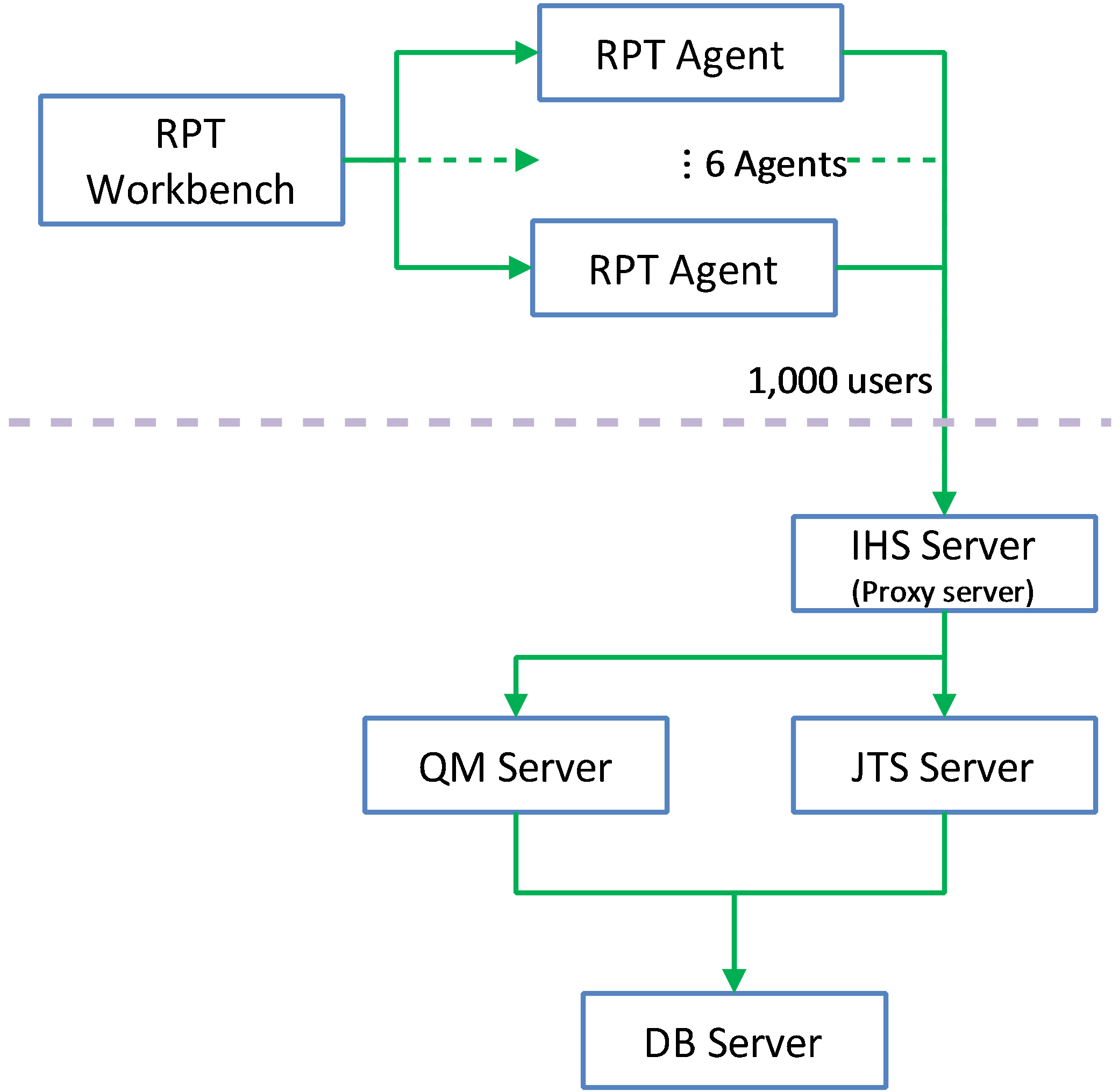

Appendix A: Topology

The topology under test is based on Standard Topology (E1) Enterprise - Distributed / Linux / DB2.

The specifications of the machines under test are listed in the table below. Server tuning details are listed in Appendix D (Key configuration parameters).

| Function | Number of Machines | Machine Type | CPU / Machine | Total # of CPU vCores/Machine | Memory/Machine | Disk | Disk capacity | Network interface | OS and Version |
|---|---|---|---|---|---|---|---|---|---|
| Proxy Server (IBM HTTP Server and WebSphere Plugin) | 1 | IBM System x3250 M4 | 1 x Intel Xeon E3-1240 3.4GHz (quad-core) | 8 | 16GB | RAID 1 -- SAS Disk x 2 | 299GB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.5 |
| JTS Server | 1 | IBM System x3550 M4 | 2 x Intel Xeon E5-2640 2.5GHz (six-core) | 24 | 32GB | RAID 5 -- SAS Disk x 2 | 897GB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.5 |
| QM Server | 1 | IBM System x3550 M4 | 2 x Intel Xeon E5-2640 2.5GHz (six-core) | 24 | 32GB | RAID 5 -- SAS Disk x 2 | 897GB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.5 |
| Database Server | 1 | IBM System x3650 M4 | 2 x Intel Xeon E5-2640 2.5GHz (six-core) | 24 | 64GB | RAID 5 -- SAS Disk x 2 | 2.4TB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.1 |
| RPT workbench | 1 | IBM System x3550 M4 | 2 x Intel Xeon E5-2640 2.5GHz (six-core) | 24 | 32GB | RAID 5 -- SAS Disk x 2 | 897GB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.4 |
| RPT Agents | 6 | VM image | 4 x Intel Xeon X5650 CPU (1-Core 2.67GHz) | 1 | 2GB | N/A | 30GB | Gigabit Ethernet | Red Hat Enterprise Linux Server release 6.5 |
| Network switches | N/A | Cisco 2960G-24TC-L | N/A | N/A | N/A | N/A | N/A | Gigabit Ethernet | 24 Ethernet 10/100/1000 ports |

N/A: Not applicable.

vCores = Cores with hyperthreading
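As a quick check of the vCores column for the physical servers, the arithmetic can be written out as below. The helper name `vcores` is illustrative, and the sketch assumes two hardware threads per core with hyperthreading enabled; it is not meant to describe the VM-based RPT agents.

```python
def vcores(sockets, cores_per_socket, threads_per_core=2):
    """vCores = physical cores multiplied by hardware threads per core.

    Assumption: hyperthreading provides two threads per core.
    """
    return sockets * cores_per_socket * threads_per_core


proxy_vcores = vcores(sockets=1, cores_per_socket=4)   # 1 x quad-core Xeon E3-1240
server_vcores = vcores(sockets=2, cores_per_socket=6)  # 2 x six-core Xeon E5-2640
```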

Network connectivity

All server machines and test clients are located on the same subnet. The LAN has 1000 Mbps of maximum bandwidth and less than 0.3 ms latency in ping.

Data volume and shape

The artifacts were migrated from a 5.0 GA repository that contains a total of 579,142 artifacts in one large project.

The repository contained the following data:

- 60 test plans

- 30,000 test scripts

- 30,000 test cases

- 120,000 test case execution records

- 360,000 test case results

- 3,000 test suites

- 5,000 work items (defects)

- 200 test environments

- 600 test phases

- 30 build definitions

- 6,262 execution sequences

- 3,000 test suite execution records

- 15,000 test suite execution results

- 6,000 build records

- QM Database size = 12 GB

- QM index size = 1.5 GB
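The repository shape can be summarized with a short script that totals the listed counts and computes each artifact type's share. The counts are copied from the list above; the variable names are illustrative. Note that the listed counts add up to 579,152, which is 10 more than the stated total of 579,142; the report does not indicate which figure is off.

```python
# Artifact counts copied from the list above.
ARTIFACT_COUNTS = {
    "test plans": 60,
    "test scripts": 30_000,
    "test cases": 30_000,
    "test case execution records": 120_000,
    "test case results": 360_000,
    "test suites": 3_000,
    "work items (defects)": 5_000,
    "test environments": 200,
    "test phases": 600,
    "build definitions": 30,
    "execution sequences": 6_262,
    "test suite execution records": 3_000,
    "test suite execution results": 15_000,
    "build records": 6_000,
}

STATED_TOTAL = 579_142  # total quoted in the text

listed_total = sum(ARTIFACT_COUNTS.values())
# Fraction of the repository taken up by each artifact type.
shares = {name: count / listed_total for name, count in ARTIFACT_COUNTS.items()}
largest = max(shares, key=shares.get)
```

Test case results dominate the repository, at roughly 62% of all artifacts.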

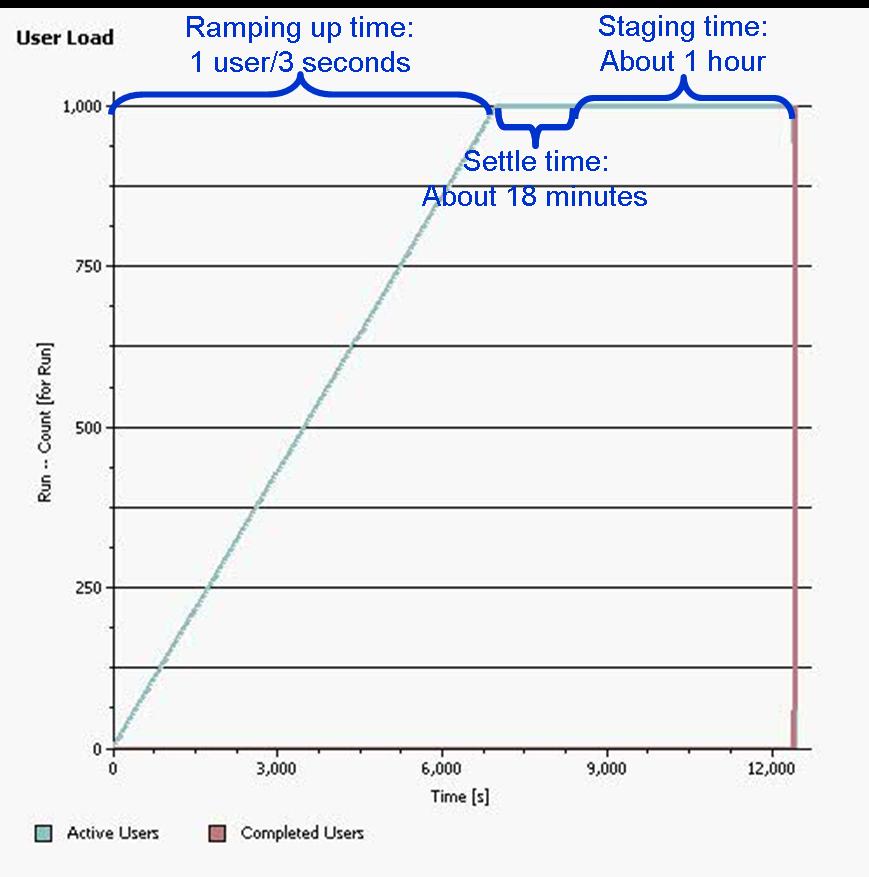

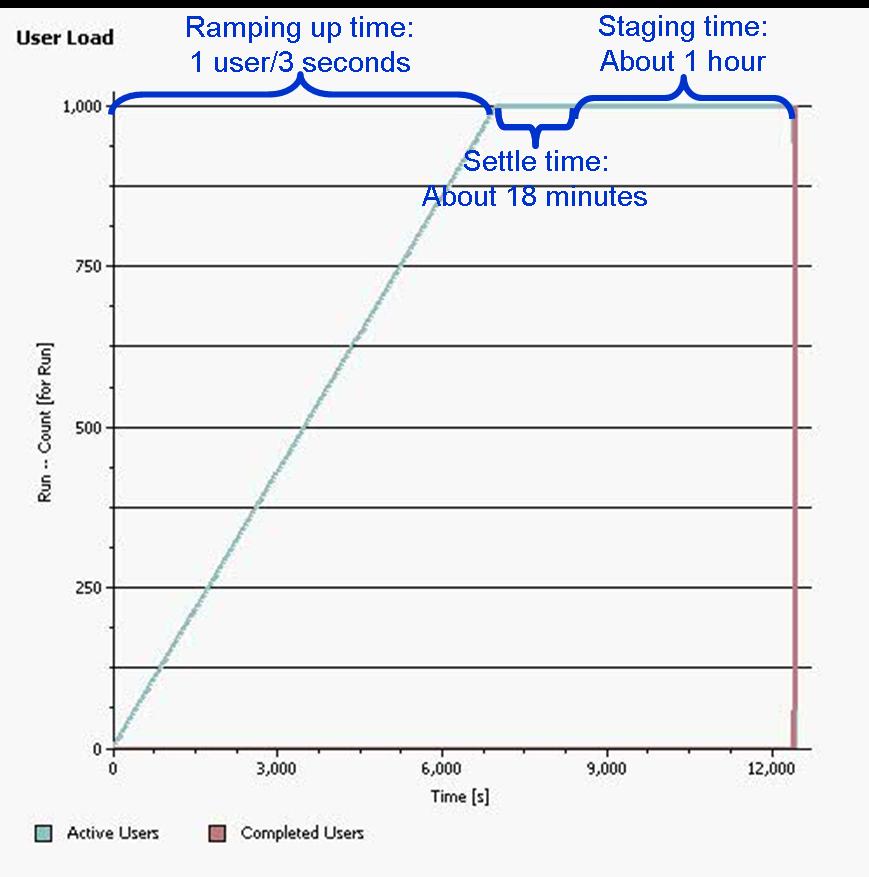

Appendix B: Methodology

Rational Performance Tester (RPT) was used to simulate the workload created using the web client. Each user completed a random use case from a set of available use cases. A Rational Performance Tester script is created for each use case. The scripts are organized by pages, and each page represents a user action.

The workload is role-based: each role has its own sequence of actions, and the roles are separated into individual user groups within an RPT schedule.
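The idea of each user picking a random use case within a role can be illustrated with a weighted random choice. This is only a sketch of the concept, not RPT's scheduling mechanism or API: it assumes the selection is weighted by the percentages given for the Test Lead role in the Test Cases table, and `pick_use_case` is a hypothetical helper.

```python
import random

# Use case weights (%) for the Test Lead role, taken from the Test Cases table.
TEST_LEAD_USE_CASES = {
    "Edit test environment": 8,
    "Edit test plan": 15,
    "Create test case": 4,
    "Bulk edit of test cases": 1,
    "Full text search": 3,
    "Browse test script": 32,
    "Test execution": 26,
    "Defect search": 11,
}


def pick_use_case(weights, rng):
    """Pick one use case at random, proportionally to its weight."""
    names = list(weights)
    return rng.choices(names, weights=list(weights.values()), k=1)[0]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
picks = [pick_use_case(TEST_LEAD_USE_CASES, rng) for _ in range(10_000)]
browse_share = picks.count("Browse test script") / len(picks)
```

Over many iterations the observed share of each use case approaches its configured weight, for example about 32% for Browse test script.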

The settings of the RPT schedule are shown below:

User roles, test cases and workload characterization

User Roles

| User role | % of Total | Related Actions |
|---|---|---|
| QE Manager | 8 | Test plan create, Browse test plan and test case, Browse test script, Simple test plan copy, Defect search, View dashboard |
| Test Lead | 19 | Edit Test Environments, Edit test plan, Create test case, Bulk edit of test cases, Full text search, Browse test script, Test Execution, Defect search |
| Tester | 68 | Defect create, Defect modify, Defect search, Edit test case, Create test script, Edit test script, Test Execution, Browse test execution record |
| Dashboard Viewer | 5 | View dashboard (with login and logout) |

Test Cases

| User Role | Percentage of the user role | Sequence of Operations |
|---|---|---|
| QE Manager | 1 | Test plan create: user creates a test plan, then adds a description, business objectives, test objectives, 2 test schedules, test estimate, quality objectives, and entry and exit criteria. |
| | 26 | Browse test plans and test cases: user browses assets by: View Test Plans, then configures View Builder for name search; opens the test plan found, reviews various sections, then closes it. Searches for a test case by name, opens the test case found, reviews various sections, then closes it. |
| | 26 | Browse test script: user searches for a test script by name, opens it, reviews it, then closes it. |
| | 1 | Simple test plan copy: user searches for a test plan by name, selects one, then makes a copy. |
| | 23 | Defect search: user searches for a specific defect by number, reviews the defect (pause), then closes it. |
| | 20 | View dashboard: user views the dashboard. |
| Test Lead | 8 | Edit test environment: user lists all test environments, then selects one of the environments and modifies it. |
| | 15 | Edit test plan: user lists all test plans; from the query result, opens a test plan for editing, adds a test case to the test plan, edits a few other sections of the test plan, and then saves the test plan. |
| | 4 | Create test case: user opens the Create Test Case page, enters data for a new test case, and then saves the test case. |
| | 1 | Bulk edit of test cases: user searches for test cases with root name and edits all found with owner change. |
| | 3 | Full text search: user does a full text search of all assets in the repository using root name, then opens one of the found items. |
| | 32 | Browse test script: user searches for a test script by name, opens it, reviews it, then closes it. |
| | 26 | Test execution: user selects View Test Execution Records, by name, starts execution, enters pass/fail verdict, reviews results, sets points, then saves. |
| | 11 | Defect search: user searches for a specific defect by number, reviews the defect (pause), then closes it. |
| Tester | 8 | Defect create: user opens the Create Defect page, enters data for a new defect, and then saves the defect. |
| | 5 | Defect modify: user searches for a specific defect by number, modifies it, then saves it. |
| | 14 | Defect search: user searches for a specific defect by number, reviews the defect (pause), then closes it. |
| | 6 | Edit test case: user searches for a test case by name, opens the test case in the editor, then adds a test script to the test case (user clicks next a few times (server-side paging feature) before selecting the test script). The test case is then saved. |
| | 4 | Create test script: user opens the Create Test Script page, enters data for a new test script, and then saves the test script. |
| | 8 | Edit test script: user selects a test script by name. The test script is then opened for editing, modified, and saved. |
| | 42 | Test execution: user selects View Test Execution Records, by name, starts execution, enters pass/fail verdict, reviews results, sets points, then saves. |
| | 7 | Browse test execution record: user browses TERs by name, then selects the TER and opens the most recent results. |
| Dashboard Viewer | 100 | View dashboard (with login and logout): user logs in, views the dashboard, then logs out. This user provides some login/logout behavior to the workload. |
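Combining the two tables gives the share of the overall user population running each use case: the role's share of all users multiplied by the use case's share within the role, summed over roles. The sketch below uses the percentages as listed; the function name `effective_mix` and the shortened use case labels are illustrative.

```python
# Role shares (% of all users) from the User Roles table.
ROLE_PCT = {"QE Manager": 8, "Test Lead": 19, "Tester": 68, "Dashboard Viewer": 5}

# Use case shares (% within each role) from the Test Cases table.
USE_CASE_PCT = {
    "QE Manager": {
        "Test plan create": 1, "Browse test plans and test cases": 26,
        "Browse test script": 26, "Simple test plan copy": 1,
        "Defect search": 23, "View dashboard": 20,
    },
    "Test Lead": {
        "Edit test environment": 8, "Edit test plan": 15, "Create test case": 4,
        "Bulk edit of test cases": 1, "Full text search": 3,
        "Browse test script": 32, "Test execution": 26, "Defect search": 11,
    },
    "Tester": {
        "Defect create": 8, "Defect modify": 5, "Defect search": 14,
        "Edit test case": 6, "Create test script": 4, "Edit test script": 8,
        "Test execution": 42, "Browse test execution record": 7,
    },
    "Dashboard Viewer": {"View dashboard (with login and logout)": 100},
}


def effective_mix(role_pct, use_case_pct):
    """Return {use case: fraction of all users}, summed across roles."""
    mix = {}
    for role, role_share in role_pct.items():
        for use_case, uc_share in use_case_pct[role].items():
            mix[use_case] = mix.get(use_case, 0.0) + (role_share / 100) * (uc_share / 100)
    return mix


mix = effective_mix(ROLE_PCT, USE_CASE_PCT)
test_execution_users = mix["Test execution"] * 1000  # out of 1,000 concurrent users
```

With these inputs, Test execution accounts for about a third of the load (19% x 26% + 68% x 42% = 33.5%). The QE Manager and Tester percentages as listed sum to 97 and 94 rather than 100, so the computed shares total about 0.957 instead of 1.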

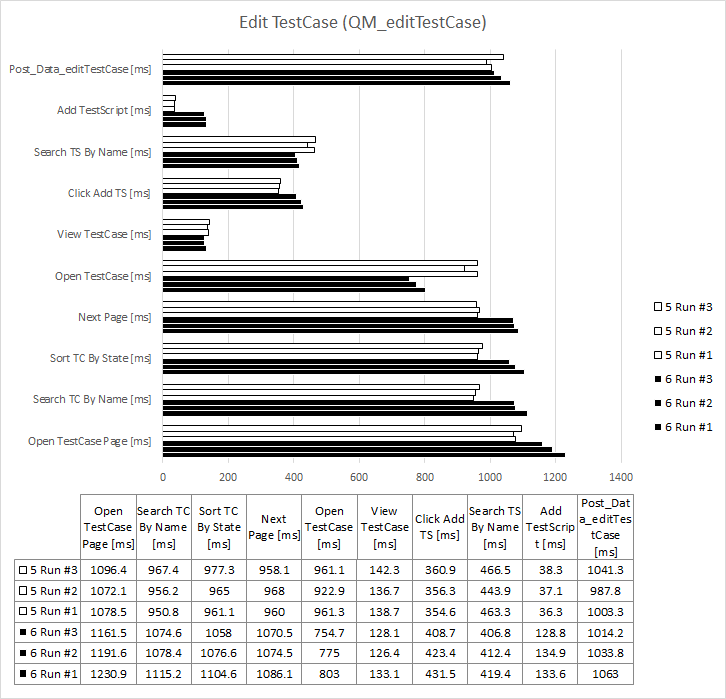

Response time comparison

The page performance is measured as the mean (average) of its response time in the result data. For the majority of the pages under test, there is little variation between runs, and the mean values are close to the median of the sample for the load.
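One way to check that a mean is a fair summary of a page's response time is to compare it with the median of the same samples. The sketch below does this for made-up samples; `mean_median_gap` is a hypothetical helper and the numbers are not taken from the test data.

```python
from statistics import mean, median


def mean_median_gap(samples_ms):
    """Relative gap between mean and median; values near 0 suggest little skew."""
    med = median(samples_ms)
    return abs(mean(samples_ms) - med) / med


# Hypothetical response time samples (ms) for two pages.
steady_page = [410, 395, 402, 398, 405]    # tightly clustered samples
skewed_page = [400, 400, 400, 400, 2400]   # one slow outlier pulls the mean up

steady_gap = mean_median_gap(steady_page)
skewed_gap = mean_median_gap(skewed_page)
```

A large gap would indicate that a few slow requests dominate the mean, in which case the median or a percentile would describe typical behavior better.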

Appendix C: Detailed performance results

Average page response time comparison breakdown

NOTE

For all use case comparison charts, the unit is milliseconds, and smaller values are better.

Create Defect

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Create Test Plan

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Create Test Case

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Create Test Script

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Browse Test Plans & Test Cases

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Browse Test Scripts

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Bulk Edit of Test Cases

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Defect Search

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Defect Modify

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

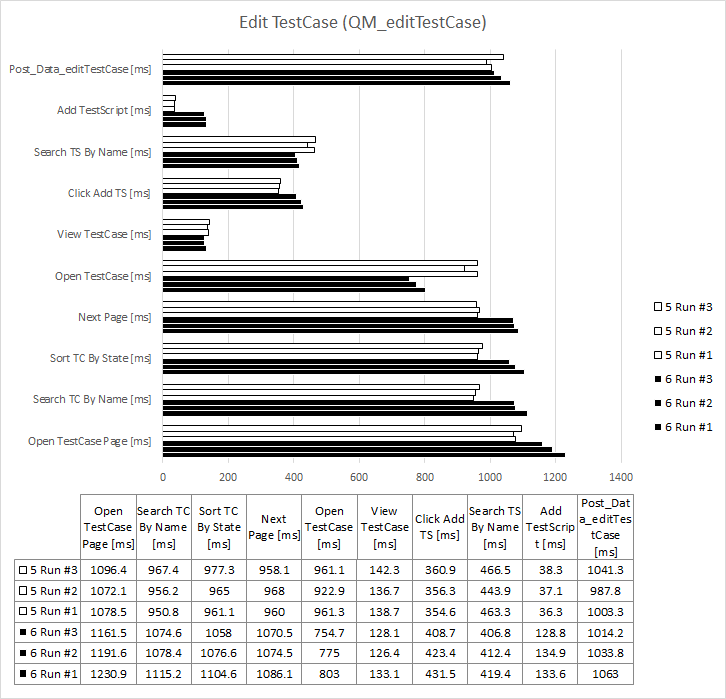

Edit Test Case

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Edit Test Environment

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Edit Test Plan

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Edit Test Script

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Full Text Search

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Simple Test Plan Copy

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Test Execution For 4 Steps

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

Test Execution Record Browsing

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

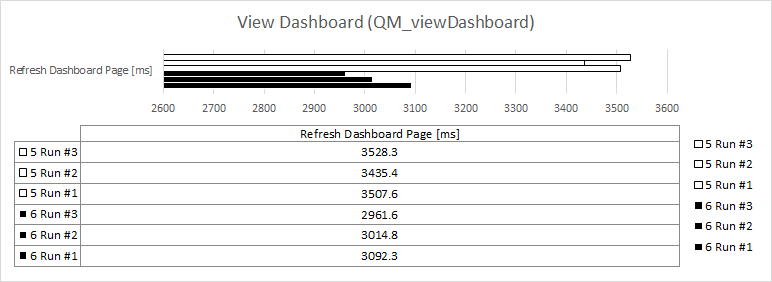

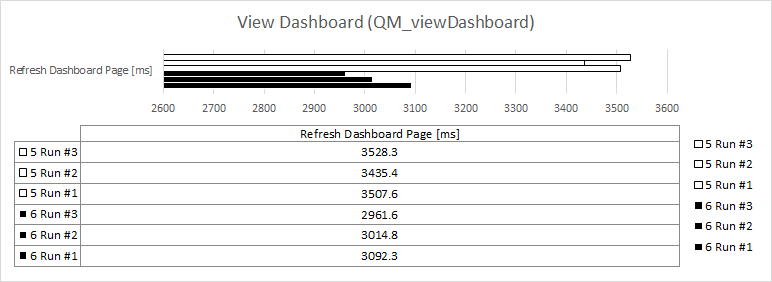

View Dashboard

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

View Dashboard with Login

Back to Test Cases & workload characterization

Back to Test Cases & workload characterization

RPT network transfer comparison

RPT script execution comparison

RPT script executions 6.0 vs 5.0 - per hour

| Page name | v6.0 counts | v5.0 counts |
|---|---|---|
| Login_Prompt_viewDashboard_DV | 504 | 499 |
| Submit_User_Creds_viewDashboard_DV | 496 | 495 |
| Logout_viewDashboard_DV | 498 | 497 |
| View TestScript Page | 205 | 207 |
| InsertImage4 | 206 | 209 |
| InsertImage2 | 209 | 207 |
| InsertImage3 | 209 | 204 |
| InsertImage1 | 214 | 212 |
| Post_Data_editTestScript | 219 | 215 |
| Search Scripts By Name | 205 | 208 |
| Sort By Modified | 203 | 205 |
| Click Next - Edit Test Script | 203 | 209 |
| Open Selected TestScript | 205 | 212 |
| Open TestPlan Page | 165 | 168 |
| Search TestPlan By Name | 162 | 169 |
| Open Test Plan | 161 | 172 |
| Post_Data_editTestPlan | 160 | 165 |
| Open LabManagement Page | 77 | 90 |
| Search Test Environment | 76 | 86 |
| Post_Data_editTestEnv | 71 | 86 |
| Update Configuration | 73 | 84 |
| Open TER Page | 1,447 | 1,460 |
| Search TER By Name | 1,456 | 1,478 |
| Execute The TER | 1,459 | 1,462 |
| Execute Step1 | 1,445 | 1,439 |
| Execute Step2 | 1,423 | 1,453 |
| Execute Step4 | 1,420 | 1,463 |
| Execute Step3 | 1,430 | 1,462 |
| Show Results | 1,429 | 1,461 |
| Open TestCase Page | 170 | 170 |
| Sort TC By State | 173 | 170 |
| Search TC By Name | 172 | 171 |
| Next Page | 174 | 169 |
| Open TestCase | 170 | 172 |
| View TestCase | 165 | 165 |
| Click Add TS | 167 | 163 |
| Search TS By Name | 166 | 158 |
| Add TestScript | 166 | 158 |
| Post_Data_editTestCase | 153 | 151 |
| ViewTestScript | 498 | 467 |
| SearchTestScripts | 499 | 463 |
| SortTestScripts | 496 | 462 |
| ClickNext - Browse TestScript | 501 | 466 |
| OpenTestScript | 499 | 468 |
| CCM WI Home | 160 | 132 |
| Open Defect - Modify Defect | 161 | 133 |
| Post_Data_modifyDefect | 158 | 136 |
| CCM WI HOME | 610 | 608 |
| Search Defect By Number | 158 | 133 |
| OpenCreateTestScriptPage | 91 | 106 |
| Post_Data_createTestScript | 90 | 101 |
| Refresh Dashboard Page | 81 | 86 |
| RQM_WorkItemDropdown | 193 | 200 |
| createDefectDialog_createDefect | 193 | 202 |
| Post_Data_createDefect | 194 | 211 |
| ViewTERsPage | 214 | 227 |
| SearchTER | 907 | 898 |
| ClickNext | 898 | 891 |
| openPreviousResults | 895 | 885 |
| ClickLatestResult | 901 | 881 |
| SortbyMilestone | 900 | 898 |
| Search Defect By Number | 602 | 617 |
| openDefect | 610 | 618 |
| SearchForString | 32 | 44 |
| OpenFoundItem | 31 | 45 |
| Open TestCase Page | 13 | 12 |
| Search TC By Name | 14 | 12 |
| Select All TestCases | 15 | 13 |
| Change Owner | 16 | 13 |
| LoadNewTestPlanForm | 4 | 5 |
| Post_Data_createTestPlan | 4 | 5 |
| OpenCreateTestCasePage | 45 | 47 |
| Post_Data_createTestCase | 45 | 48 |
| Open TestPlan View | 93 | 91 |
| Search TP | 99 | 91 |
| Open TP | 96 | 91 |
| Review Test Objects | 93 | 90 |
| Review Requirement | 90 | 92 |
| Review Schedule | 93 | 96 |
| Review Estimation | 98 | 95 |
| Review Quality Objective | 100 | 94 |
| Review TestCases | 100 | 92 |
| TestCase View | 99 | 90 |
| Search TestCase | 104 | 88 |
| Sort By State | 101 | 89 |
| Click Next | 97 | 88 |
| Open TestCase | 99 | 87 |
| Review TestScript | 96 | 85 |
| OpenViewTestPlanPage | 3 | 4 |
| SearchTPbyName | 3 | 4 |
| SelectTPandDuplicate | 2 | 3 |
| startDuplicate | 2 | 3 |
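To judge whether the two releases were driven with comparable load, the per-hour execution counts can be compared as a percentage change. The sketch below does this for three rows copied from the table above; `pct_change` is an illustrative helper, and the report itself does not define a tolerance for these differences.

```python
# (v6.0 count, v5.0 count) per hour, copied from three rows of the table above.
COUNTS = {
    "Open TER Page": (1447, 1460),
    "ViewTestScript": (498, 467),
    "Post_Data_editTestEnv": (71, 86),
}


def pct_change(v60, v50):
    """Percentage change of the 6.0 count relative to the 5.0 count."""
    return (v60 - v50) / v50 * 100.0


changes = {page: round(pct_change(v60, v50), 2) for page, (v60, v50) in COUNTS.items()}
```

High-volume pages such as Open TER Page differ by under 1%, while low-volume pages show larger relative swings because a handful of executions is a bigger fraction of a small count.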

Resource utilization

OS resource utilization 6.0 vs 5.0 - overview

| | 6.0 vs 5.0 |
|---|---|
| CPU | |
| Disk | |
| Memory | |
| Network | |

QM server resource utilization 6.0 vs 5.0

| | 6.0 | 5.0 |
|---|---|---|
| CPU | | |
| Disk | | |
| Memory | | |
| Network | | |

DB server resource utilization 6.0 vs 5.0

| | 6.0 | 5.0 |
|---|---|---|
| CPU | | |
| Disk | | |
| Memory | | |
| Network | | |

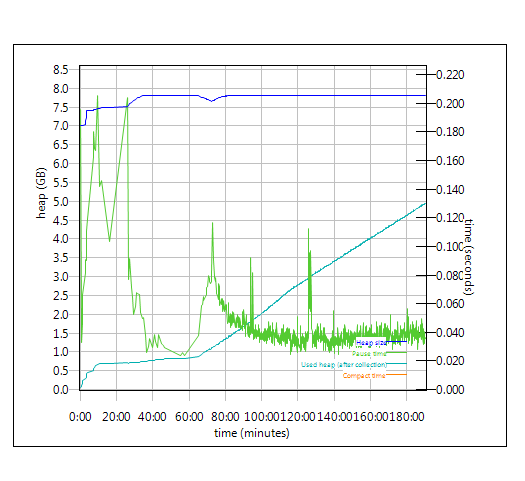

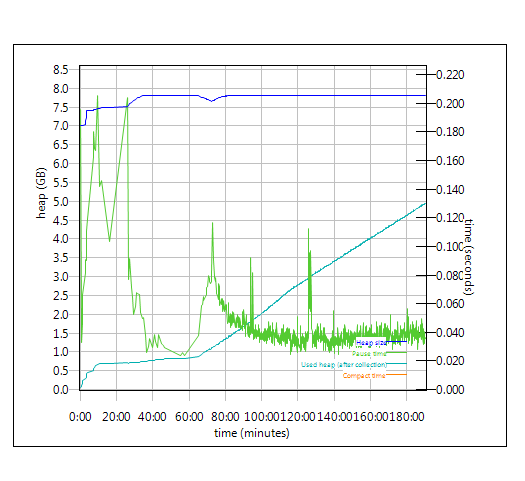

Garbage collection - JVM GC Chart

For the JVM parameters, refer to Appendix D (Key configuration parameters).

Verbose garbage collection is enabled to log the GC activities. These GC logs showed very little variation between runs. There is also no discernible difference between versions. Below is one example of the output from the GC log for each application.

WAS JVM Garbage Collection Charts 6.0 vs 5.0

| | 6.0 | 5.0 |
|---|---|---|
| QM | | |
| JTS | | |

Appendix D: Key configuration parameters

| Product | Version | Highlights for configurations under test |
|---|---|---|
| IBM HTTP Server for WebSphere Application Server | 8.5.0.1 | IBM HTTP Server functions as a reverse proxy server implemented via the Web server plug-in for WebSphere Application Server. Configuration details can be found in the CLM infocenter. HTTP server (httpd.conf): OS configuration: max user processes = unlimited |
| IBM WebSphere Application Server Network Deployment | 8.5.0.1 | JVM settings (GC policy and arguments, max and init heap sizes): -Xgcpolicy:gencon -Xmx8g -Xms8g -Xmn2g -Xss786K -Xcompressedrefs -Xgc:preferredHeapBase=0x100000000 -verbose:gc -Xverbosegclog:gc.log -XX:MaxDirectMemorySize=1G. Thread pools: Maximum WebContainer = Minimum WebContainer = 500. OS configuration (system-wide resources for the app server process owner): max user processes = unlimited; open files = 65536 |
| DB2 | ESE 10.1.0.3 | |
| LDAP server | | |
| License server | | N/A |
| RPT workbench | 8.2.1.5 | Defaults |
| RPT agents | 8.2.1.5 | Defaults |
| Network | | Shared subnet within test lab |
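The heap layout implied by the JVM settings can be worked out from the options themselves. The sketch below parses the size options from the argument string listed above and derives the tenured space as maximum heap minus nursery. It assumes that, under the gencon policy, -Xmn fixes the nursery size and the rest of the heap is tenured space; `size_option` is a hypothetical helper, not part of any IBM tool.

```python
import re

# JVM arguments copied from the WebSphere Application Server settings above.
JVM_ARGS = "-Xgcpolicy:gencon -Xmx8g -Xms8g -Xmn2g -Xss786K -Xcompressedrefs"

_UNITS = {"k": 1024, "m": 1024 ** 2, "g": 1024 ** 3}


def size_option(args, name):
    """Return the value in bytes of a size option such as -Xmx8g, or None if absent."""
    match = re.search(rf"(?:^|\s)-{name}(\d+)([kKmMgG])(?=\s|$)", args)
    if match is None:
        return None
    return int(match.group(1)) * _UNITS[match.group(2).lower()]


max_heap = size_option(JVM_ARGS, "Xmx")
nursery = size_option(JVM_ARGS, "Xmn")
# Assumption: with gencon, the heap outside the nursery is tenured space.
tenured = max_heap - nursery
```

With these settings the heap is fixed at 8 GB (-Xms equals -Xmx), of which 2 GB is nursery, leaving 6 GB of tenured space.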

About the authors:

Hongyan Huo is a performance engineer focusing on the scalability of products in the Collaborative Lifecycle Management family.

Vaughn Rokosz is the performance lead for the CLM product family.

Questions and comments:

- What other performance information would you like to see here?

- Do you have performance scenarios to share?

- Do you have scenarios that are not addressed in documentation?

- Where are you having problems in performance?
